Safety on Liaura

A safer digital space starts with the right structure

Liaura reduces risk by changing how the platform works in the first place.

Children are not placed into a public network. They do not chase follower counts. They cannot freely search for strangers, and strangers cannot search for them. Their connections are guardian-managed and built around real-world trust.

Liaura is built around one simple idea: children should not have to learn digital life inside adult social networks.

Instead, Liaura gives children a smaller, safer place to practise connection, creativity and communication with people they know, while parents and guardians stay meaningfully involved.

(1)

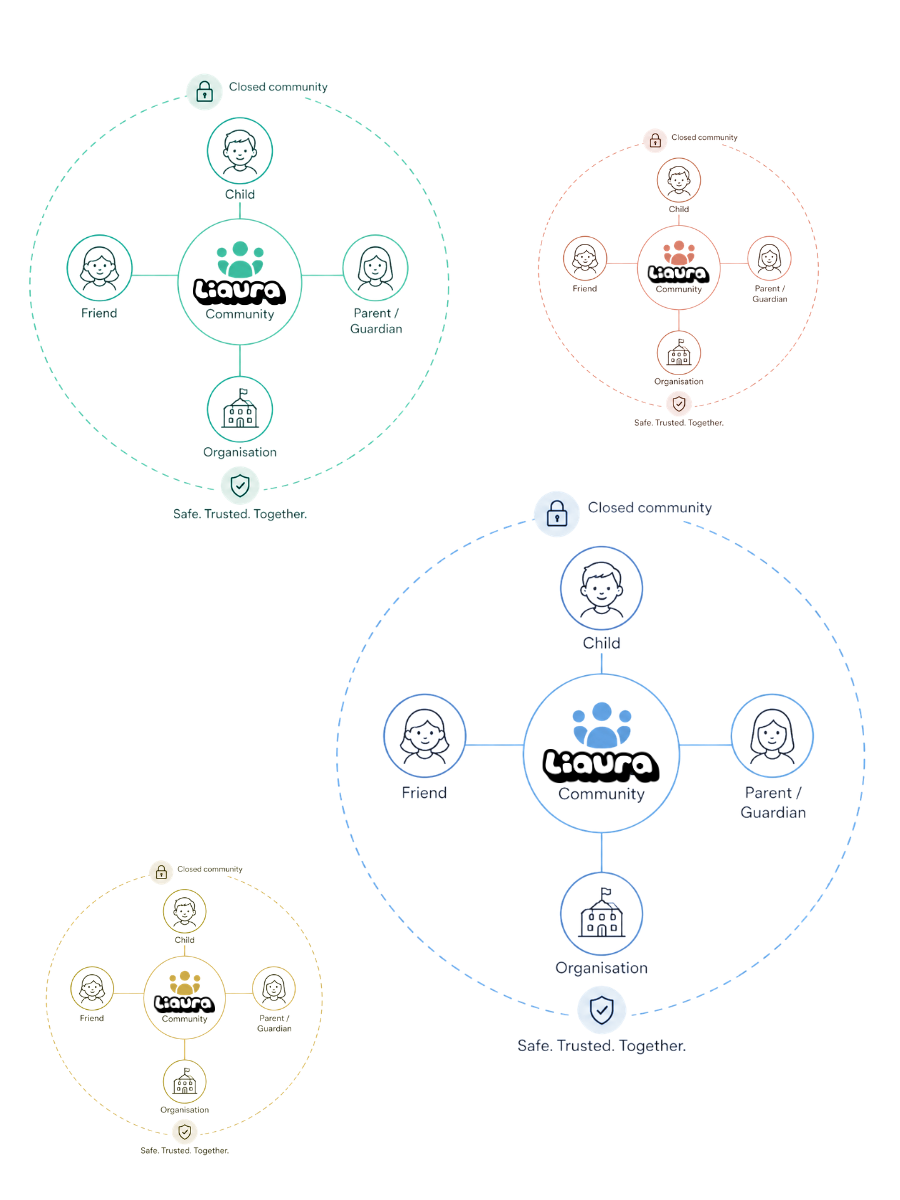

Closed communities

Children connect inside smaller, trusted spaces rather than open public feeds. Liaura is designed around bounded access, so children are not placed into a public social network.

(2)

Friends-only

Children chat and share Moments with people they know, using time-limited friend codes. This helps reduce random discovery and keeps connections grounded in real-world trust.

(3)

Guardian visibility

Parents and guardians stay meaningfully involved, with visibility over their child’s activity, connections and concerns. Children can practise digital independence with adult support nearby.

(4)

Moderation & reports

Automated moderation tools, reports, manual review and community oversight work together to help concerns surface. Our technology is designed to block harmful content before it is sent, and reports offer a backup layer of protection for anything it misses. Safety is treated as a layered system, not a single filter.

How friend requests work

Liaura is designed to avoid open stranger discovery.

When a child wants to connect with a friend, the process is built around real-world trust. Time-limited friend codes support safer connections, while guardians remain part of the wider safety structure.

This means children can begin learning how online connection works without being exposed to the risks of open public networks too early.
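For readers curious about how a time-limited code can work in principle, here is a minimal sketch in Python. It is an illustration only, not Liaura's implementation: the class and function names, the 15-minute lifetime, the 8-character length and the single-use rule are all assumptions made for the example.

```python
import secrets
import time
from dataclasses import dataclass

# Assumed values for illustration; the real lifetime and format are not stated.
CODE_TTL_SECONDS = 15 * 60
CODE_LENGTH = 8
ALPHABET = "ABCDEFGHJKMNPQRSTUVWXYZ23456789"  # skips look-alikes such as O/0 and I/1


@dataclass
class FriendCode:
    owner_id: str
    expires_at: float
    used: bool = False


class FriendCodeRegistry:
    """Hypothetical in-memory store of short-lived, single-use friend codes."""

    def __init__(self, ttl_seconds=CODE_TTL_SECONDS, clock=time.time):
        self._ttl = ttl_seconds
        self._clock = clock  # injectable so expiry can be tested without waiting
        self._codes = {}

    def issue(self, owner_id):
        """Create a random code that stops working after the TTL."""
        code = "".join(secrets.choice(ALPHABET) for _ in range(CODE_LENGTH))
        self._codes[code] = FriendCode(owner_id, self._clock() + self._ttl)
        return code

    def redeem(self, code, requester_id):
        """Return the (owner, requester) pair, or None if the code is not valid."""
        entry = self._codes.get(code)
        if entry is None or entry.used:
            return None  # unknown or already used
        if self._clock() >= entry.expires_at:
            return None  # expired
        if entry.owner_id == requester_id:
            return None  # a child cannot add themselves
        entry.used = True
        return (entry.owner_id, requester_id)
```

Because a code has to be passed on directly and expires quickly, there is nothing for a stranger to search or browse. In a design like this, a redeemed pair could then go to a guardian for approval before the connection becomes active, although that step is also an assumption here.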

How moderation works

Liaura uses a layered safety model.

Some concerns may be identified and blocked through auto-moderation tools. Others may be raised through reports from children, parents or communities. Where appropriate, issues can be reviewed manually.

This combination matters because child safety cannot rely on one tool alone. Safer platforms need good design, clear rules, parent involvement, reporting routes, human judgement and technology working together.
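The layering described above can be pictured with a short Python sketch. It is a simplified illustration under stated assumptions, not a description of Liaura's actual systems: the `ModerationPipeline` class, the placeholder term list and the review queue are all hypothetical, and real automated moderation is far more sophisticated than a word match.

```python
from dataclasses import dataclass, field

# Placeholder list for illustration only; a real filter would not be a word list.
BLOCKED_TERMS = {"example-blocked-term"}


@dataclass
class ModerationPipeline:
    """Hypothetical two-layer flow: an automatic check first, reports second."""

    delivered: list = field(default_factory=list)
    review_queue: list = field(default_factory=list)

    def send(self, sender_id, text):
        """Layer 1: check the message before it is delivered."""
        words = set(text.lower().split())
        if words & BLOCKED_TERMS:
            return False  # blocked before sending
        self.delivered.append((sender_id, text))
        return True

    def report(self, reporter_id, message_index, reason):
        """Layer 2: anyone can flag a delivered message for manual review."""
        if not 0 <= message_index < len(self.delivered):
            raise IndexError("no such message")
        self.review_queue.append(
            {
                "reporter": reporter_id,
                "message": self.delivered[message_index],
                "reason": reason,
            }
        )
```

The point of the sketch is the shape rather than the detail: a message that passes the automatic check can still be reported, and a reported message ends up in front of a person.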

What Liaura avoids

Liaura is intentionally designed away from the features that make mainstream social media difficult for children.

No open adult network.

No public follower culture.

No endless public feed.

No pressure to perform for likes.

No stranger-first discovery.

No advertising-led attention loops.

A safer first step into digital life

Children still need to learn how to communicate, make choices, manage friendships and build confidence online.

Liaura gives them a calmer place to practise, with trusted people around them and parents still close by.

Liaura is the middle path: not offline forever, not adult social media too soon.